
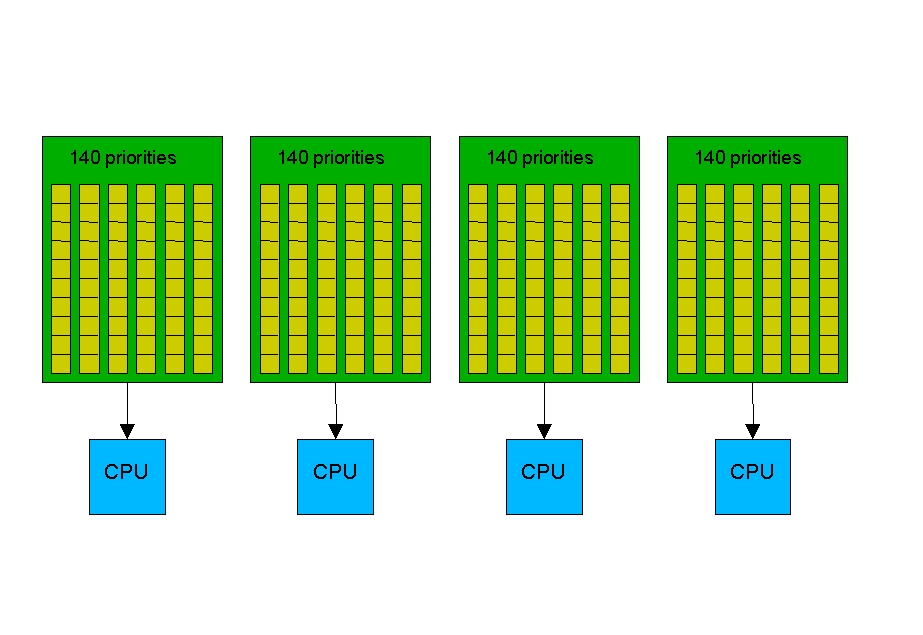

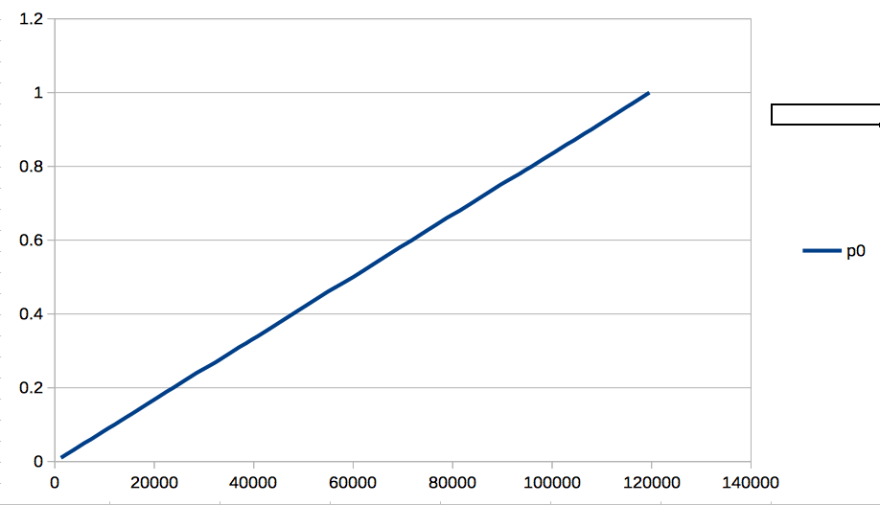

Most device drivers prefer to lead a privileged life inside the kernel, but some are at home in the indeterministic world outside. Several kernel subsystems, such as SCSI, USB, and I²C, offer some level of support for user mode drivers, so you might be able to control those devices without writing a single line of kernel code.

In spite of the inclement weather in user land, user mode drivers enjoy certain advantages. You won't have to reboot the system every time you dereference a dangling pointer. Some user mode drivers will even work across operating systems if the device subsystem enjoys the services of a standard user-space programming library. Here are some rules of thumb to help decide whether your driver should reside in user space:

- Apply the possibility test. What can be done in user space should probably stay in user space.
- If you have to talk to a large number of slow devices and if performance requirements are modest, explore the possibility of implementing the drivers in user space. If you have time-critical performance requirements, stay inside the kernel.
- If your code needs the services of kernel APIs, needs access to kernel variables, or is intertwined with interrupt handling, it has a strong case for being in kernel space.
- If much of what your code does can be construed as policy, user land might be its logical residence.
- If the rest of the kernel needs to invoke your code's services, it's a candidate for staying inside the kernel.
- You can't easily do floating-point arithmetic inside the kernel. Floating-point unit (FPU) instructions can, however, be used from user space.

That said, you can't accomplish too much from user space. Many important device classes, such as storage media and network adapters, cannot be driven from user land. But even when a kernel driver is the appropriate solution, it's a good idea to model and test as much code as you can in user space before moving it to kernel space. The testing cycle is faster, and it's easier to traverse all possible code paths and ensure that they are clean.

In this chapter, the term user space driver (or user mode driver) is used in a generic sense that does not strictly conform to the semantics of a driver implied thus far in the book. An application is considered to be a user mode driver if it's a candidate for being implemented inside the kernel, too.

The 2.6 kernel overhauled a subsystem that is of special interest to user space drivers. The new process scheduler offers huge response-time benefits to user mode code, so let's start with that.

Many user mode drivers need to perform some work in a time-bound manner. In user space, however, indeterminism due to scheduling and paging often gets in the way of fast response times. To see how you can minimize the impact of the former hurdle, let's dip into recent Linux schedulers and understand their underlying philosophy.

In the 2.4 and earlier days, the scheduler used to recalculate scheduling parameters of each task before taking its pick. The time consumed by the algorithm thus increased linearly with the number of contending tasks in the system. In other words, it used O(n) time, where n is the number of active tasks. On a system running at high loads, this translated to significant overhead. The 2.4 algorithm also didn't work very well on SMP systems.

Time consumed by an O(n) algorithm depends linearly on the size of its input, and an O(n²) solution depends quadratically on the length of its input, but an O(1) technique is independent of the input and thus scales well. The 2.6 scheduler replaced the O(n) algorithm with an O(1) method. In addition to being super-scalable, the scheduler has built-in heuristics to improve user responsiveness by providing preferential treatment to tasks involved in I/O activity. Processes are of two kinds: I/O bound and CPU bound. I/O-bound tasks are often sleep-waiting for device I/O, while CPU-bound ones are workaholics addicted to the processor. Paradoxically, to achieve fast response times, lazy tasks get incentives from the O(1) scheduler, while studious ones draw flak.

Highlights of the O(1) Scheduler. The following are some of the important features of the O(1) scheduler:

- The algorithm uses two run queues made up of 140 priority lists: an active queue that holds tasks that have time slices left and an expired queue that contains processes whose time slices have expired.
- When a task finishes its time slice, it's inserted into the expired queue in sorted order of priority. The active and expired queues are swapped when the former becomes empty.
- To decide which process to run next, the scheduler does not navigate through the entire queue. Instead, it picks the task with the highest priority from the active queue.
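The active and expired run queues of the O(1) scheduler described in this section can be sketched in C. This is a minimal illustration under stated assumptions, not the kernel's implementation: `struct task`, `struct prio_array`, `struct runqueue`, and all helper names are hypothetical. The bitmap of non-empty lists is an assumption added here to show one way the highest-priority task can be found without walking every list, and the sketch assumes that a lower number means a higher priority. `__builtin_ctzll` is a GCC/Clang builtin.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define NUM_PRIO     140                    /* 140 priority lists, as in the text */
#define BITMAP_WORDS ((NUM_PRIO + 63) / 64) /* fixed size: 3 words */

/* Hypothetical task descriptor (not the kernel's task_struct). */
struct task {
    int prio;              /* assumption: 0 is highest, NUM_PRIO - 1 is lowest */
    struct task *next;
};

/* One run queue: a FIFO list per priority plus a bitmap of non-empty lists. */
struct prio_array {
    uint64_t bitmap[BITMAP_WORDS];
    struct task *head[NUM_PRIO];
    struct task *tail[NUM_PRIO];
    int nr_tasks;
};

struct runqueue {
    struct prio_array arrays[2];
    struct prio_array *active;   /* tasks with time slice left */
    struct prio_array *expired;  /* tasks whose time slice ran out */
};

static void rq_init(struct runqueue *rq)
{
    memset(rq, 0, sizeof(*rq));
    rq->active = &rq->arrays[0];
    rq->expired = &rq->arrays[1];
}

/* Append a task to the list for its priority; cost does not depend on task count. */
static void enqueue(struct prio_array *a, struct task *t)
{
    assert(t->prio >= 0 && t->prio < NUM_PRIO);
    t->next = NULL;
    if (a->tail[t->prio])
        a->tail[t->prio]->next = t;
    else
        a->head[t->prio] = t;
    a->tail[t->prio] = t;
    a->bitmap[t->prio / 64] |= (uint64_t)1 << (t->prio % 64);
    a->nr_tasks++;
}

/* Scan a fixed number of bitmap words, so the work is bounded by a constant. */
static int first_set_prio(const struct prio_array *a)
{
    for (int w = 0; w < BITMAP_WORDS; w++)
        if (a->bitmap[w])
            return w * 64 + __builtin_ctzll(a->bitmap[w]);
    return -1;
}

/* A task that used up its time slice moves to the expired queue. */
static void task_expired(struct runqueue *rq, struct task *t)
{
    enqueue(rq->expired, t);
}

/* Pick the highest-priority task, swapping the queues if active is empty. */
static struct task *pick_next(struct runqueue *rq)
{
    if (rq->active->nr_tasks == 0) {
        struct prio_array *tmp = rq->active;
        rq->active = rq->expired;
        rq->expired = tmp;
    }

    int prio = first_set_prio(rq->active);
    if (prio < 0)
        return NULL;                         /* nothing runnable */

    struct task *t = rq->active->head[prio];
    rq->active->head[prio] = t->next;
    if (!t->next) {                          /* list became empty */
        rq->active->tail[prio] = NULL;
        rq->active->bitmap[prio / 64] &= ~((uint64_t)1 << (prio % 64));
    }
    rq->active->nr_tasks--;
    return t;
}
```

A caller would initialize the queue with `rq_init()`, add runnable tasks with `enqueue(rq.active, &task)`, call `pick_next()` to choose what to run, and call `task_expired()` when a task uses up its time slice. Because each priority has its own list, placing a task on the list for its priority keeps the queue ordered by priority without any sorting pass, and swapping the two queues is a pointer exchange.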
